Hi Kyle,

The update to set WebRequest.ReadWriteTimeout to 30000 ms was deployed 03/21/2023 at 0845 hours CST. Our proxy server has not had any issues since then. It’ll have to run 2-3 weeks before I’d be comfortable calling this the fix. Since this issue surfaced, the proxy server has only once or twice run as long as two weeks without an issue.
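For reference, here is a minimal sketch of that kind of setting, not the deployed code. The class name, method name, and URL are placeholders made up for illustration. ReadWriteTimeout is a property of HttpWebRequest, and its documented default is 300000 ms, separate from the Timeout property (default 100000 ms), which governs waiting for the response itself.

```csharp
using System;
using System.Net;

static class TimeoutSketch
{
    // Hypothetical helper: builds a request with a 30-second read/write timeout.
    public static HttpWebRequest Configure(string url)
    {
        // Creating the request does not contact the server yet.
        var request = (HttpWebRequest)WebRequest.Create(url);

        // Applies to reads and writes on the request/response streams.
        request.ReadWriteTimeout = 30000;

        // Timeout (waiting for GetResponse) is left at its default here.
        return request;
    }

    static void Main()
    {
        // Placeholder URL, not the provider's real WMS endpoint.
        var request = Configure("https://example.com/wms");
        Console.WriteLine("ReadWriteTimeout: " + request.ReadWriteTimeout + " ms");
        Console.WriteLine("Timeout: " + request.Timeout + " ms");
    }
}
```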

In the meantime, I’ve been testing our provider’s WMS with a test-bed application that I have. It goes directly to the provider’s URL and does not use our proxy server. It has encountered errors on several occasions: if I pan and zoom quickly and continuously, and keep at it, I get both timeout and 502 Bad Gateway errors. I’ll email you the log and screen-capture. The test-bed application uses the default WebRequest timeout values. Seeing these errors tells me that there is something amiss somewhere in their network. They are using AWS. I can recreate these errors with the test-bed application both on the server on which our proxy server is running and on my development network, which is in another state.
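To keep the two error types apart in a log, something like the sketch below could be used. It is an illustration only, not what the test-bed application actually does; the class and method names are made up. It relies on WebException.Status and, for HTTP-level failures, on the status code of the attached HttpWebResponse.

```csharp
using System;
using System.Net;

static class WmsErrorSketch
{
    // Hypothetical helper: turns a WebException into a short label for logging.
    public static string Classify(WebException ex)
    {
        // The request or a stream read/write exceeded its timeout.
        if (ex.Status == WebExceptionStatus.Timeout)
            return "timeout";

        // The server answered, but with an HTTP error status such as 502.
        if (ex.Status == WebExceptionStatus.ProtocolError &&
            ex.Response is HttpWebResponse response)
        {
            return response.StatusCode == HttpStatusCode.BadGateway
                ? "502 Bad Gateway"
                : "HTTP " + (int)response.StatusCode;
        }

        // Anything else (DNS failure, connection closed, and so on).
        return ex.Status.ToString();
    }
}
```

It would be called from a catch (WebException ex) block around the GetResponse call, with the returned label written to the log next to the request URL.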

I installed DebugView on the proxy server on 03/25/2023 and have not seen any errors since, either in the system or in the DebugView output.

You’re correct in that if the image is retrieved from the Tile Cache, then a SendingWebRequest is not invoked. We do have a Tile Cache, so the log makes sense.

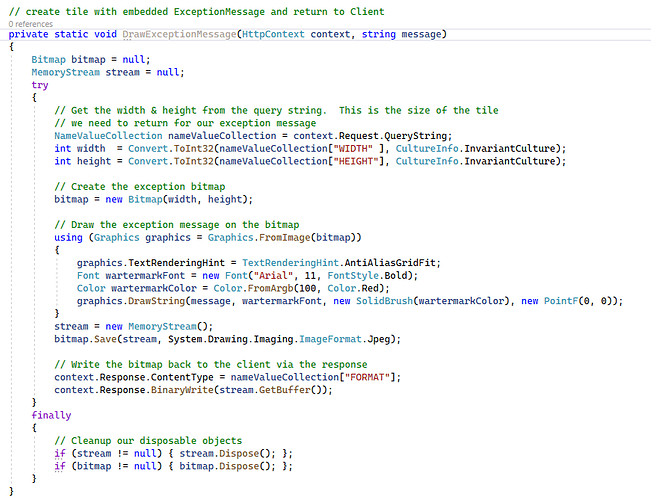

Yes, GetMapCore does indeed invoke base.GetMapCore. Prior to that, it does some error checking, adds Parameters for the URL, and logs. There are no try/catch blocks, so I may add a couple just to be safe.

Thanks,

Dennis